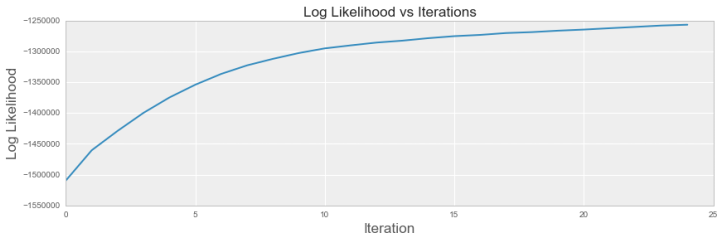

We verify that the log likelihood of the data under our model increases with every iteration. Convergence shows up as a plateau, where additional iterations yield diminishing gains in log likelihood. The graph below shows our log likelihood leveling off after roughly 10-15 iterations.
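The plateau criterion can also be checked numerically rather than by eye. The following is a minimal sketch, assuming the per-iteration log likelihoods have been collected into a list; the function name `has_plateaued` and the `window` and `rel_tol` parameters are hypothetical choices for illustration, not part of the original analysis.

```python
import numpy as np


def has_plateaued(log_liks, window=5, rel_tol=1e-3):
    """Heuristic plateau check on a log-likelihood trace.

    Returns True when the average per-iteration gain over the last
    `window` iterations is below `rel_tol` times the magnitude of the
    latest log likelihood. Both thresholds are illustrative assumptions.
    """
    ll = np.asarray(log_liks, dtype=float)
    if ll.size <= window:
        # Not enough iterations yet to measure a gain over the window.
        return False
    gain = ll[-1] - ll[-1 - window]
    return abs(gain) / window < rel_tol * abs(ll[-1])
```

A relative tolerance is used here because the scale of the log likelihood depends on corpus size; an absolute threshold would need retuning for each dataset.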

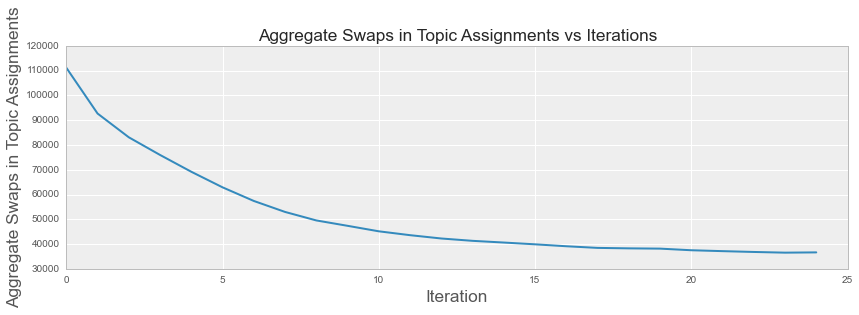

We also track a custom statistic: the total number of words whose topic assignment changed between consecutive iterations, which we call swaps. If the algorithm converges, the number of swaps per iteration should level out. The graph above illustrates this trend.
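As a rough illustration of how this statistic could be computed, the sketch below assumes the topic assignments for each iteration are stored as a flat integer array with one entry per word token. That storage layout and the name `count_swaps` are assumptions made for this example.

```python
import numpy as np


def count_swaps(prev_assignments, curr_assignments):
    """Count word tokens whose topic assignment differs between two iterations.

    Both inputs are assumed to be flat sequences of topic ids, aligned so
    that position i refers to the same word token in both iterations.
    """
    prev = np.asarray(prev_assignments)
    curr = np.asarray(curr_assignments)
    if prev.shape != curr.shape:
        raise ValueError("assignment arrays must have the same shape")
    return int(np.sum(prev != curr))
```

Calling this once per iteration on the previous and current assignments, and appending the result to a list, yields the series plotted against iteration number.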

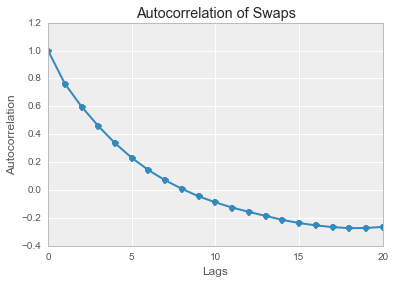

We also verify that the autocorrelation of the number of swaps decreases as the lag increases.
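For reference, one common way to estimate the autocorrelation of such a series is the biased sample estimator sketched below. Which estimator produced the plot is not stated, so this is an assumption; the function name `autocorrelation` and its `max_lag` parameter are likewise hypothetical.

```python
import numpy as np


def autocorrelation(series, max_lag):
    """Biased sample autocorrelation of a 1-D series for lags 0..max_lag.

    The series is mean-centered, and each lagged product sum is divided
    by the lag-0 sum of squares, so the value at lag 0 is always 1.
    """
    x = np.asarray(series, dtype=float)
    if not 0 <= max_lag < x.size:
        raise ValueError("max_lag must be between 0 and len(series) - 1")
    x = x - x.mean()
    denom = np.dot(x, x)
    if denom == 0:
        raise ValueError("series is constant; autocorrelation is undefined")
    return np.array(
        [np.dot(x[: x.size - k], x[k:]) / denom for k in range(max_lag + 1)]
    )
```

Applied to the per-iteration swap counts, values that shrink toward zero as the lag grows indicate that counts from distant iterations are only weakly dependent on each other.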